Moveworks vs Pipeline AI

Pipeline AI has been discontinued. This comparison is kept for historical reference.

Both tools are evenly matched across our comparison criteria.

Rating

Neither tool has been rated yet.

Popularity

Both tools have similar popularity.

Pricing

Both tools have paid pricing.

Community Reviews

Both tools have a similar number of reviews.

| Criteria | Moveworks | Pipeline AI |

|---|---|---|

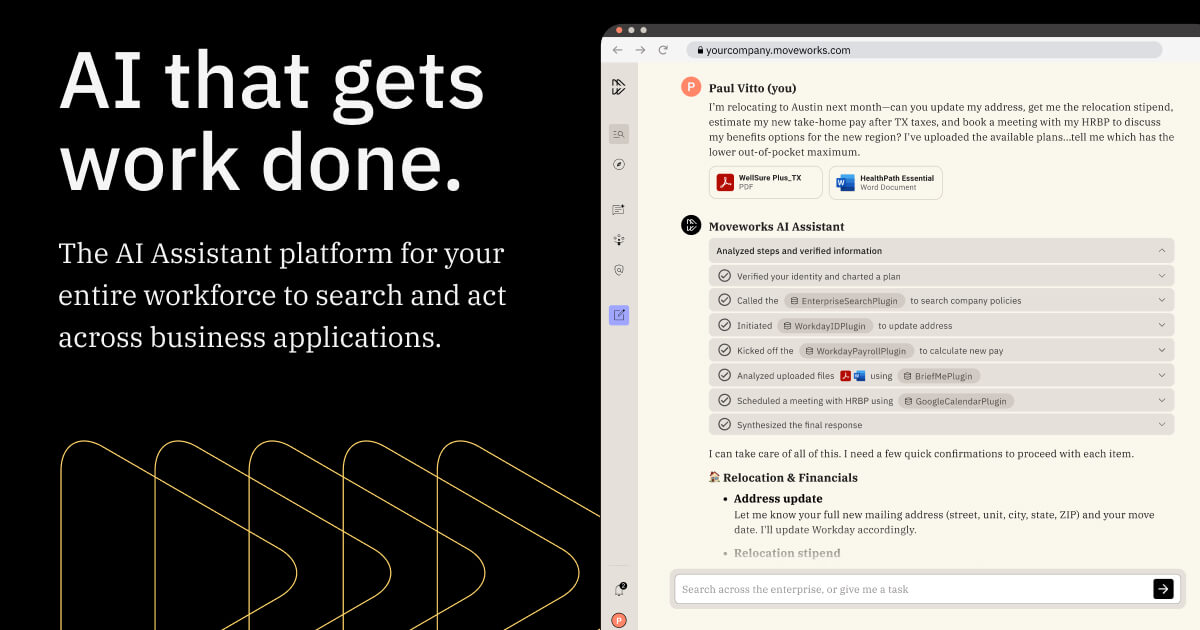

| Description | Moveworks is an advanced AI-powered employee experience platform designed to revolutionize internal support across large enterprises. Leveraging sophisticated large language models, it intelligently resolves employee issues and automates complex workflows spanning IT, HR, Finance, and other critical departments. The platform significantly enhances workplace productivity and satisfaction by providing instant, accurate, and personalized assistance, reducing the burden on support teams and empowering employees with self-service capabilities. | Pipeline AI is a specialized serverless GPU inference platform engineered for machine learning engineers and data scientists. It provides a robust, scalable, and cost-efficient solution for deploying and managing AI models, including large language models (LLMs), by abstracting the complexities of underlying infrastructure. The platform significantly accelerates the time-to-market for AI applications, offering optimized performance with features like lightning-fast cold starts and intelligent auto-scaling, making it ideal for real-time inference workloads. |

| What It Does | Moveworks employs conversational AI to understand and act on employee requests submitted via various channels like Slack, Teams, email, or web portals. It integrates deeply with existing enterprise systems (e.g., ServiceNow, Workday) to automate resolutions, retrieve relevant information, and complete tasks without human intervention. By using LLMs, it provides natural language interaction, ensuring employees receive quick and precise answers to their queries. | Pipeline AI enables users to deploy their machine learning models, including complex LLMs, onto serverless GPU infrastructure with minimal effort. It automatically handles resource provisioning, scaling (including scale-to-zero), load balancing, and performance optimizations like cold start reduction. The platform serves as a crucial MLOps layer, allowing developers to focus on model development rather than infrastructure management, through intuitive APIs and SDKs. |

| Pricing Type | paid | paid |

| Pricing Model | paid | paid |

| Pricing Plans | Enterprise Plan: Contact for Pricing | Custom Enterprise Pricing: Contact for pricing |

| Rating | N/A | N/A |

| Reviews | N/A | N/A |

| Views | 8 | 8 |

| Verified | No | No |

| Key Features | Conversational AI Interface, Intelligent Workflow Automation, Proactive Communications, Knowledge Management & Search, Deep Enterprise Integrations | Serverless GPU Infrastructure, Sub-Second Cold Starts, Intelligent Auto-Scaling, LLM Optimization, Framework Agnostic Deployment |

| Value Propositions | Reduced Operational Costs, Enhanced Employee Productivity, Improved Employee Satisfaction | Accelerated AI Deployment, Significant Cost Savings, Effortless Scalability |

| Use Cases | IT Helpdesk Automation, HR Query Resolution, Employee Onboarding & Offboarding, Internal Communications & Alerts, Facilities & Workplace Requests | Deploying Custom LLMs, Real-time Computer Vision, NLP Application Backends, AI-Powered Recommendation Engines, A/B Testing ML Models |

| Target Audience | Moveworks is primarily designed for large enterprises and mid-market companies aiming to optimize their internal support functions. Key beneficiaries include IT leaders, HR executives, internal communications teams, and operations managers seeking to reduce support ticket volumes, enhance employee self-service, and improve overall operational efficiency. | This tool is primarily designed for machine learning engineers, data scientists, and MLOps teams who need to deploy and manage AI models in production environments. It caters to developers building AI-powered applications that require high performance, scalability, and cost-efficiency for their inference workloads, particularly those working with large language models or real-time AI services. |

| Categories | Text Generation, Business & Productivity, Data Analysis, Automation | Code & Development, Automation, Data Processing |

| Tags | employee experience, internal support, it automation, hr automation, conversational ai, large language models, workflow automation, enterprise solutions, self-service, ai copilot | serverless, gpu inference, mlops, llm deployment, model serving, ai infrastructure, auto-scaling, deep learning, machine learning, ai api |

| GitHub Stars | N/A | N/A |

| Last Updated | N/A | N/A |

| Website | www.moveworks.com | www.pipeline.ai |

| GitHub | N/A | N/A |

Who is Moveworks best for?

Moveworks is primarily designed for large enterprises and mid-market companies aiming to optimize their internal support functions. Key beneficiaries include IT leaders, HR executives, internal communications teams, and operations managers seeking to reduce support ticket volumes, enhance employee self-service, and improve overall operational efficiency.

Who is Pipeline AI best for?

Pipeline AI is primarily designed for machine learning engineers, data scientists, and MLOps teams who need to deploy and manage AI models in production environments. It caters to developers building AI-powered applications that require high performance, scalability, and cost-efficiency for their inference workloads, particularly those working with large language models or real-time AI services.