Vocera

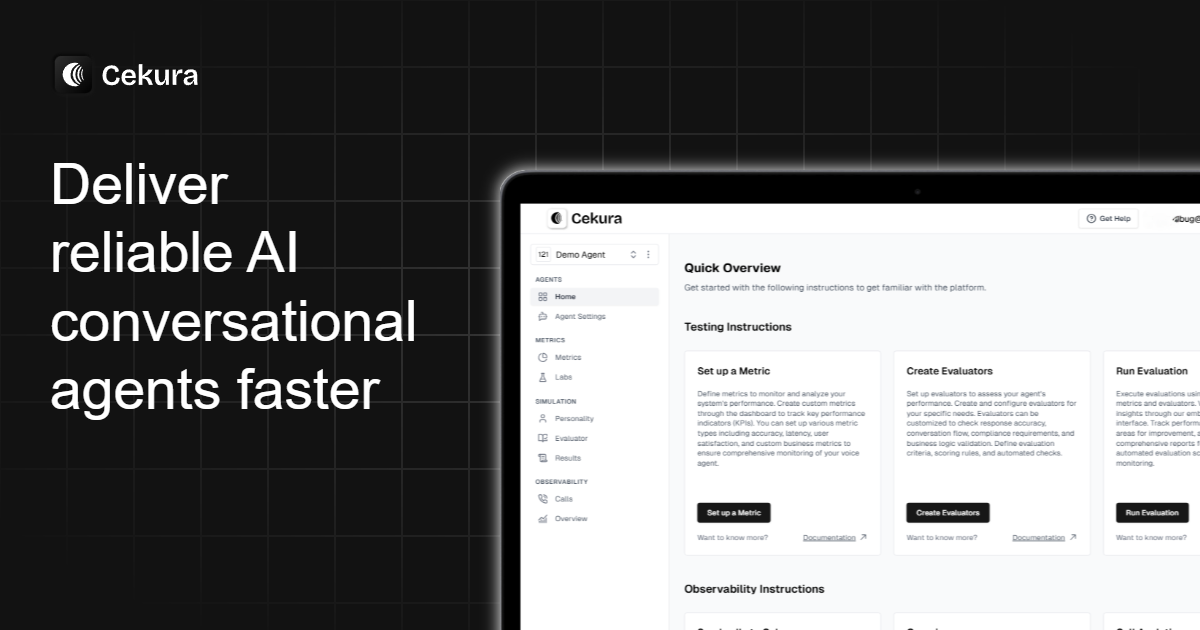

Vocera, by Cekura, is a testing and observability tool for AI voice agents and other conversational AI systems. It helps businesses check the reliability, performance, and user experience of their virtual assistants, chatbots, and voicebots. With automated testing, real-time monitoring, and in-depth debugging tools, Vocera aims to catch operational issues before they degrade customer interactions in AI-driven communication channels.

What It Does

Vocera evaluates and monitors conversational AI systems throughout their lifecycle. It simulates user interactions to run functional, regression, and load tests, and it provides real-time observability into live agent performance. Teams can use it to identify, diagnose, and resolve issues with intent recognition, response accuracy, and system latency.

Pricing

Pricing Plans

Custom pricing for enterprise-grade voice AI testing and observability solutions, tailored to specific business needs and scale.

- Tailored solutions

- Enterprise support

- Scalable testing

- Advanced analytics

Core Value Propositions

Enhanced AI Reliability

Ensure your conversational AI agents consistently perform as expected, minimizing errors and improving user trust. This prevents negative customer experiences.

Proactive Issue Resolution

Detect and diagnose problems in real time, before they significantly impact users, so that corrective action can be taken quickly. This reduces operational downtime and support costs.

Superior User Experience

Optimize AI interactions based on detailed performance and sentiment analysis, leading to higher customer satisfaction and engagement. This directly impacts business outcomes.

Reduced Development & Ops Costs

Automate testing and streamline debugging processes, significantly cutting down on manual effort and time spent on maintenance. This frees up resources for innovation.

Use Cases

Pre-deployment AI Testing

Rigorously test new conversational AI models or feature updates for functional correctness and performance before deploying to production. This prevents regressions and ensures quality.

Live Voicebot Monitoring

Continuously monitor the performance and user experience of customer-facing voicebots in real time, detecting anomalies and errors as they occur. This helps keep service uninterrupted.

Debugging Complex AI Flows

Analyze detailed conversation logs to identify and debug issues in multi-turn conversational AI applications, pinpointing specific points of failure. This accelerates problem resolution.

Performance SLA Validation

Validate that AI voice agents consistently meet defined service level agreements for response time, accuracy, and availability. This ensures contractual compliance and reliability.
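As a rough illustration of what SLA validation involves, the sketch below checks a batch of recorded agent turns against a latency and an accuracy threshold. It is not Vocera's API: `CallRecord`, `Sla`, and `check_sla` are hypothetical names, the fields are assumptions, and availability is left out for brevity.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class CallRecord:
    """One completed agent turn (hypothetical schema, not Vocera's)."""
    response_ms: float  # delay between the user's turn and the agent's reply
    correct: bool       # whether the reply was judged correct


@dataclass
class Sla:
    """Hypothetical SLA thresholds."""
    p95_response_ms: float  # 95th-percentile latency must not exceed this
    min_accuracy: float     # fraction of correct replies must be at least this


def check_sla(records: list[CallRecord], sla: Sla) -> dict[str, bool]:
    """Return a pass/fail flag per SLA criterion."""
    latencies = [r.response_ms for r in records]
    # quantiles(n=20) yields 19 cut points; the last one is the 95th percentile.
    p95 = quantiles(latencies, n=20)[-1]
    accuracy = sum(r.correct for r in records) / len(records)
    return {
        "p95_response_ms": p95 <= sla.p95_response_ms,
        "accuracy": accuracy >= sla.min_accuracy,
    }
```

Using a percentile rather than the mean keeps a handful of very slow replies from hiding behind many fast ones, which is usually what a response-time SLA is meant to catch.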

User Experience Optimization

Utilize sentiment and intent accuracy data to iteratively improve conversational design and user satisfaction for virtual assistants. This enhances overall customer interaction quality.

Technical Features & Integration

Automated AI Testing

Conduct functional, regression, and load tests on conversational AI systems to ensure reliability and performance under various conditions. This accelerates development cycles and reduces manual effort.
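To make the idea of a regression test over simulated user interactions concrete, here is a minimal sketch. It does not use Vocera's interface: `stub_agent` is a hypothetical stand-in for the agent under test, and the scenarios and intent labels are invented for illustration.

```python
from typing import Callable


def stub_agent(utterance: str) -> str:
    """Hypothetical agent under test: maps a user utterance to an intent label."""
    text = utterance.lower()
    if "balance" in text:
        return "check_balance"
    if "cancel" in text:
        return "cancel_order"
    return "fallback"


# Scripted user turns paired with the intent the agent is expected to recognize.
SCENARIOS = [
    ("What's my account balance?", "check_balance"),
    ("I want to cancel my order", "cancel_order"),
    ("Tell me a joke", "fallback"),
]


def run_regression(
    agent: Callable[[str], str],
    scenarios: list[tuple[str, str]],
) -> list[tuple[str, str, str]]:
    """Replay every scenario and return (utterance, expected, actual) for each failure."""
    failures = []
    for utterance, expected in scenarios:
        actual = agent(utterance)
        if actual != expected:
            failures.append((utterance, expected, actual))
    return failures
```

Rerunning the same scenario set after every model or prompt change and requiring an empty failure list is one simple way to catch regressions before release.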

Real-time Observability

Monitor live voice agents and chatbots through real-time dashboards that surface anomalies and performance degradation as they happen. This enables proactive issue resolution and minimizes downtime.

Root Cause Analysis

Access detailed conversation logs, error traces, and performance metrics to quickly pinpoint the exact cause of failures or poor user experiences. This streamlines debugging processes.

User Experience Evaluation

Measure key UX metrics such as intent recognition accuracy, sentiment analysis, and response times to understand and improve user satisfaction. This ensures a positive interaction with AI agents.

Integrations with AI Platforms

Seamlessly connect with popular conversational AI platforms like Google Dialogflow, Amazon Lex, and custom LLMs. This allows for flexible deployment into existing tech stacks.

Performance Benchmarking

Establish performance baselines and track improvements over time, ensuring your AI agents consistently meet service level agreements. This drives continuous optimization.
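Benchmarking against a baseline can be sketched as a comparison of two metric snapshots. The example below is an assumption-laden illustration rather than Vocera functionality: the metric names, the `LOWER_IS_BETTER` set, and the 5% default tolerance are all hypothetical.

```python
# Metrics where a smaller value is an improvement (hypothetical names).
LOWER_IS_BETTER = {"p95_response_ms"}


def find_regressions(
    baseline: dict[str, float],
    current: dict[str, float],
    tolerance: float = 0.05,
) -> dict[str, float]:
    """Return the relative change for each metric that got worse by more than `tolerance`."""
    regressions = {}
    for name, base in baseline.items():
        change = (current[name] - base) / base  # relative change vs. baseline
        if name in LOWER_IS_BETTER:
            worse = change > tolerance    # latency-style metric went up
        else:
            worse = change < -tolerance   # accuracy-style metric went down
        if worse:
            regressions[name] = change
    return regressions
```

For example, with a baseline of `{"intent_accuracy": 0.90, "p95_response_ms": 800.0}` and a current run of `{"intent_accuracy": 0.91, "p95_response_ms": 1000.0}`, only the latency metric is flagged, because it rose by 25% while accuracy improved.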

Target Audience

Vocera is designed for AI/ML teams, QA engineers, product managers, and developers responsible for building, deploying, and maintaining conversational AI systems. It is particularly useful for enterprises and other businesses that rely heavily on AI voice agents, chatbots, and virtual assistants for customer service, sales, or internal operations.

Frequently Asked Questions

Vocera is a paid tool, available through a Custom pricing plan.

Key features of Vocera include automated AI testing, real-time observability, root cause analysis, user experience evaluation, integrations with AI platforms, and performance benchmarking.

Vocera is best suited for AI/ML teams, QA engineers, product managers, and developers responsible for building, deploying, and maintaining conversational AI systems, particularly at enterprises and businesses that rely heavily on AI voice agents, chatbots, and virtual assistants for customer service, sales, or internal operations.

