Trustworthy Language Model (TLM)

Cleanlab Studio takes a data-centric AI approach to help enterprises develop Trustworthy Language Models (TLMs). It identifies and helps mitigate inaccuracies, biases, and hallucinations in LLM outputs and in the data used to train them, giving teams a foundation for building reliable and safe generative AI applications. By addressing trustworthiness at the data source, it aims to make GenAI deployments more dependable and to reduce operational risk in business-critical use cases.

What It Does

Cleanlab Studio, the platform behind TLM, analyzes and cleans the data used to train and fine-tune large language models (LLMs), as well as the prompts sent to those models and the outputs they generate. It automatically detects and helps correct errors, biases, and inconsistencies in text data, with the goal of improving the reliability, safety, and factual accuracy of the resulting models in enterprise applications.

Pricing

Pricing Plans

Growth

Tailored for growing teams and projects that need robust data quality and trustworthiness for their AI applications. Specific pricing is provided after a demo.

- Data-centric AI platform

- Error detection

- Bias mitigation

- Automated data cleaning

Enterprise

Designed for large organizations with complex AI deployments that need enterprise-grade security, scalability, and comprehensive support for trustworthy AI at scale.

- All Growth features

- Advanced security

- Dedicated support

- Custom integrations

- Scalability

Core Value Propositions

Enhanced AI Reliability

Helps generative AI applications produce accurate and dependable outputs more consistently, reducing errors and improving user trust in AI-driven solutions.

Reduced Operational Risks

Reduces the risks associated with deploying inaccurate, biased, or harmful AI content, helping protect brand reputation and support regulatory compliance.

Accelerated GenAI Deployment

Provides the tools and methodologies to quickly build and deploy trustworthy AI systems, overcoming common hurdles related to AI quality and safety.

Proactive Bias Mitigation

Identifies and corrects biases at the data level, fostering fairer AI outcomes from the ground up rather than relying on post-hoc adjustments.

Use Cases

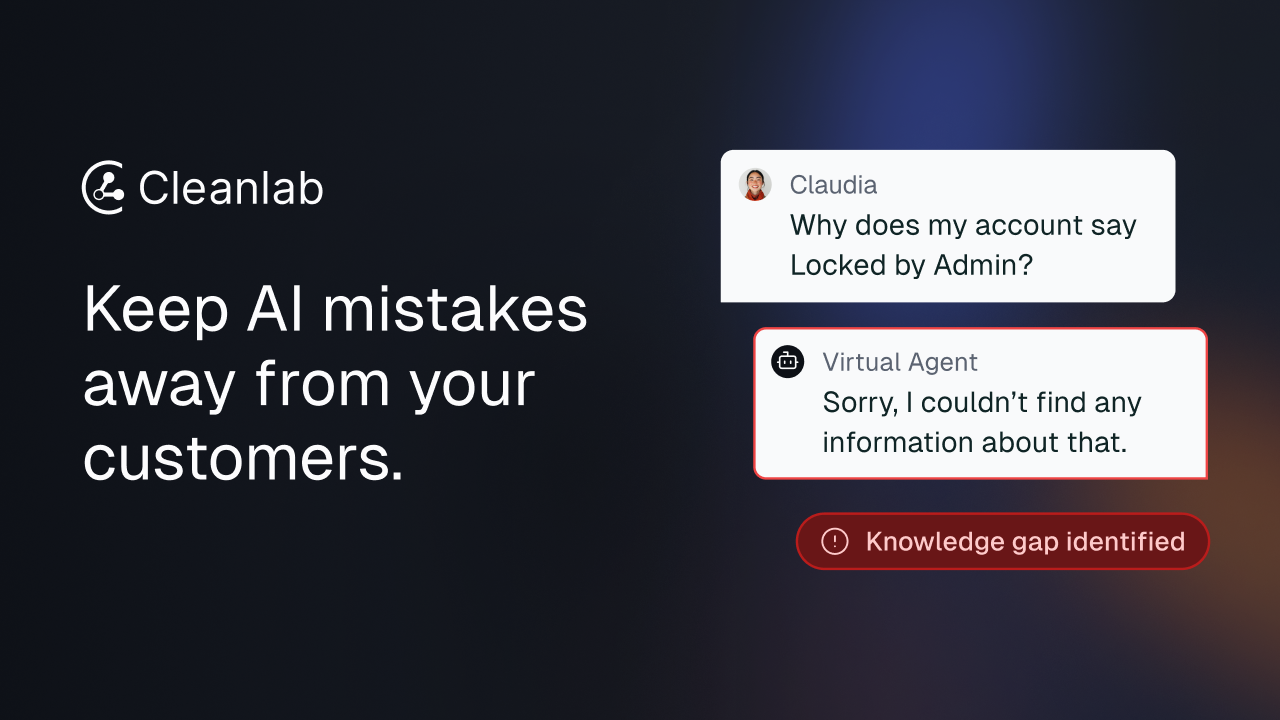

Reliable Customer Service Chatbots

Ensuring AI-powered chatbots provide consistently accurate, unbiased, and helpful information to customers, reducing misinformation and improving satisfaction.

Error-Free Content Generation

Developing generative AI systems for marketing, technical documentation, or journalism that produce factually accurate and high-quality content with minimal human oversight.

Compliant AI in Regulated Industries

Building and deploying AI assistants and applications in finance, healthcare, or legal sectors that adhere strictly to regulatory requirements and ethical guidelines.

Fair AI Decision-Making

Mitigating bias in AI systems used for sensitive tasks like loan applications, hiring, or medical diagnostics to ensure equitable and just outcomes.

Validated LLM Outputs for Analysis

Providing a framework to validate and ensure the trustworthiness of LLM-generated summaries, analyses, or research for critical business or academic use.

Secure Internal Knowledge Systems

Improving the accuracy and reliability of internal knowledge management systems powered by LLMs, ensuring employees access correct and up-to-date information.

Technical Features & Integration

Automated Data Error Detection

Automatically identifies and flags issues such as mislabeled data, outliers, and duplicates within vast text datasets, crucial for improving LLM training quality.
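As a rough illustration of this kind of check, the sketch below groups duplicate texts and flags groups whose labels disagree, since identical texts with different labels suggest at least one label is wrong. It is a minimal standard-library example, not Cleanlab Studio's implementation; the function names and the sample records are hypothetical.

```python
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivially different copies compare equal."""
    return " ".join(text.lower().split())


def find_duplicate_groups(records):
    """Group record indices whose normalized text is identical.

    `records` is a list of (text, label) pairs. Only groups with two or
    more members are returned.
    """
    groups = defaultdict(list)
    for index, (text, _label) in enumerate(records):
        groups[normalize(text)].append(index)
    return [indices for indices in groups.values() if len(indices) > 1]


def find_label_conflicts(records):
    """Return duplicate groups whose members carry different labels."""
    conflicts = []
    for indices in find_duplicate_groups(records):
        labels = {records[i][1] for i in indices}
        if len(labels) > 1:
            conflicts.append(indices)
    return conflicts


# Hypothetical sample data for illustration only.
records = [
    ("Refund my order", "billing"),
    ("refund  my ORDER", "shipping"),  # same text as above, different label
    ("Where is my package?", "shipping"),
    ("Where is my package?", "shipping"),
]
print(find_duplicate_groups(records))  # [[0, 1], [2, 3]]
print(find_label_conflicts(records))   # [[0, 1]]
```

Exact matching after normalization only catches near-verbatim copies; a production system would likely also need fuzzy or embedding-based matching to find paraphrased duplicates.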

Bias Identification & Mitigation

Pinpoints and helps correct inherent biases present in training data, which might otherwise lead to unfair, discriminatory, or skewed outputs from generative AI models.
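One simple way to surface this kind of skew in labeled data is to compare how often each group receives a given label. The sketch below computes per-group rates and the ratio of the lowest rate to the highest. It is an illustrative assumption about what such a check could look like, not the method Cleanlab Studio uses; the function names, group names, and labels are hypothetical.

```python
from collections import defaultdict


def positive_rates(rows, positive_label):
    """Share of rows per group that carry `positive_label`.

    `rows` is a list of (group, label) pairs.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, label in rows:
        totals[group] += 1
        if label == positive_label:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means all groups match."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group has any positives, so rates are equal
    return min(rates.values()) / highest


# Hypothetical sample data for illustration only.
rows = (
    [("group_a", "approved")] * 3 + [("group_a", "denied")]
    + [("group_b", "approved")] + [("group_b", "denied")] * 3
)
rates = positive_rates(rows, "approved")
print(rates)
print(round(disparity_ratio(rates), 3))
```

A ratio well below 1.0 is a prompt for closer review of the data, not proof of bias on its own, since legitimate differences between groups can also produce unequal rates.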

Hallucination Reduction

Enhances the factual accuracy and reliability of LLM generations by ensuring that models are trained on cleaner, more trustworthy data, directly combating AI hallucinations.

Prompt Engineering & Output Validation

Provides tools to systematically evaluate and refine prompts while validating the trustworthiness and quality of generated responses, ensuring alignment with desired outcomes.
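To make the idea of output validation concrete, the sketch below scores a prompt by self-consistency: it samples several responses to the same prompt and measures how many agree with the most common answer, then gates on a threshold. This is a swapped-in, generic technique used for illustration and is not a description of how TLM scores trustworthiness; the function names and the 0.8 threshold are assumptions.

```python
from collections import Counter


def consistency_score(responses):
    """Return the most common normalized answer and the fraction that match it."""
    if not responses:
        raise ValueError("need at least one response")
    normalized = [" ".join(r.lower().split()) for r in responses]
    answer, count = Counter(normalized).most_common(1)[0]
    return answer, count / len(normalized)


def validate(responses, threshold=0.8):
    """Mark an answer as trusted only if enough sampled responses agree on it."""
    answer, score = consistency_score(responses)
    return {"answer": answer, "score": score, "trusted": score >= threshold}


# Hypothetical sampled responses to the same prompt.
print(validate(["Paris", "paris ", "Lyon", "Paris"]))
print(validate(["42", "42", "42"]))
```

Responses that fall below the threshold could be routed to a human reviewer or answered with a fallback message instead of being shown to the user directly.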

Continuous Model Monitoring

Offers capabilities to continuously monitor model performance and detect data drift, helping maintain the trustworthiness and quality of TLMs over their lifecycle.
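Data drift can be quantified in many ways. The sketch below uses the Population Stability Index (PSI) to compare a numeric feature, here a hypothetical response length in tokens, between a baseline window and a current window. It is a generic monitoring example under stated assumptions, not Cleanlab Studio's monitoring logic; the bucket edges, sample values, and function names are made up for illustration.

```python
import math


def bucket_shares(values, edges):
    """Share of values in each bucket defined by ascending `edges`.

    Bucket 0 holds values below edges[0]; the last bucket holds values
    greater than or equal to edges[-1].
    """
    if not values:
        raise ValueError("need at least one value")
    counts = [0] * (len(edges) + 1)
    for value in values:
        index = 0
        while index < len(edges) and value >= edges[index]:
            index += 1
        counts[index] += 1
    return [count / len(values) for count in counts]


def psi(baseline, current, edges, eps=1e-6):
    """Population Stability Index between two samples; 0.0 means identical shares."""
    score = 0.0
    for p, q in zip(bucket_shares(baseline, edges), bucket_shares(current, edges)):
        p, q = max(p, eps), max(q, eps)  # avoid log(0) for empty buckets
        score += (q - p) * math.log(q / p)
    return score


# Hypothetical response lengths (in tokens) for two time windows.
baseline_lengths = [10, 12, 15, 20, 22, 30]
current_lengths = [40, 45, 50, 55, 60, 80]
edges = [20, 40]

print(psi(baseline_lengths, baseline_lengths, edges))
print(psi(baseline_lengths, current_lengths, edges))
```

A score near zero indicates the two windows are distributed similarly, while larger values indicate a shift worth investigating. The alert threshold is a design choice that depends on the feature and the application.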

No-Code/Low-Code Interface

Makes advanced data cleaning, error detection, and model improvement processes accessible to a broader range of users, reducing the need for specialized coding expertise.

Target Audience

This tool is designed for enterprises, AI/ML engineers, data scientists, and product managers focused on developing and deploying reliable, safe, and ethical generative AI applications in production. It also benefits compliance officers and risk management teams in regulated industries such as finance, healthcare, and legal, where AI trustworthiness is paramount.

Frequently Asked Questions

Trustworthy Language Model (TLM) is a paid tool. The available plans are Growth and Enterprise.

Key features of Trustworthy Language Model (TLM) include Automated Data Error Detection, Bias Identification & Mitigation, Hallucination Reduction, Prompt Engineering & Output Validation, Continuous Model Monitoring, and a No-Code/Low-Code Interface.

Trustworthy Language Model (TLM) is best suited for enterprises, AI/ML engineers, data scientists, and product managers focused on developing and deploying reliable, safe, and ethical generative AI applications in production. It also benefits compliance officers and risk management teams in regulated industries such as finance, healthcare, and legal, where AI trustworthiness is paramount.
