Synexa AI

Synexa AI is an MLOps platform designed to move machine learning models from development to production. It provides scalable, cost-efficient GPU infrastructure and lets teams deploy models with minimal code. By handling infrastructure management and providing integrated tools for monitoring and scaling, Synexa AI lets developers and organizations focus on building their applications rather than operating servers. The aim is to make production AI manageable across a wide range of use cases.

What It Does

Synexa AI simplifies AI model deployment by providing a serverless MLOps platform that serves models on scalable GPU infrastructure. Users can deploy models from any major ML framework using a simple SDK, which then automatically handles auto-scaling, monitoring, and secure API endpoint creation. This approach abstracts away complex operational overhead, allowing teams to focus solely on model development and refinement.
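To make the auto-scaling idea concrete, here is a minimal, generic sketch of how a serverless platform might choose a replica count from current load. It is not Synexa AI's actual algorithm or SDK: the function name `desired_replicas`, its parameters, and the default limits are all assumptions made for illustration.

```python
import math


def desired_replicas(in_flight, per_replica_capacity,
                     min_replicas=0, max_replicas=8):
    """Illustrative only: pick a replica count for the current load.

    in_flight            -- requests currently being served or queued
    per_replica_capacity -- requests one replica can handle at once
    min_replicas         -- floor (0 allows scaling to zero when idle)
    max_replicas         -- ceiling, e.g. a cost or quota limit
    All names and defaults are hypothetical, not a real platform API.
    """
    if per_replica_capacity <= 0:
        raise ValueError("per_replica_capacity must be positive")
    if in_flight < 0:
        raise ValueError("in_flight must be non-negative")
    if min_replicas > max_replicas:
        raise ValueError("min_replicas must not exceed max_replicas")

    # Round up so that partial load still gets a whole replica.
    needed = math.ceil(in_flight / per_replica_capacity)
    # Clamp to the configured floor and ceiling.
    return max(min_replicas, min(needed, max_replicas))


if __name__ == "__main__":
    print(desired_replicas(9, 4))    # 3
    print(desired_replicas(0, 4))    # 0 (scaled to zero when idle)
    print(desired_replicas(100, 4))  # 8 (capped at max_replicas)
```

A managed platform would typically add smoothing or cooldown periods on top of a rule like this so that brief traffic spikes do not cause replicas to start and stop repeatedly.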

Key Features

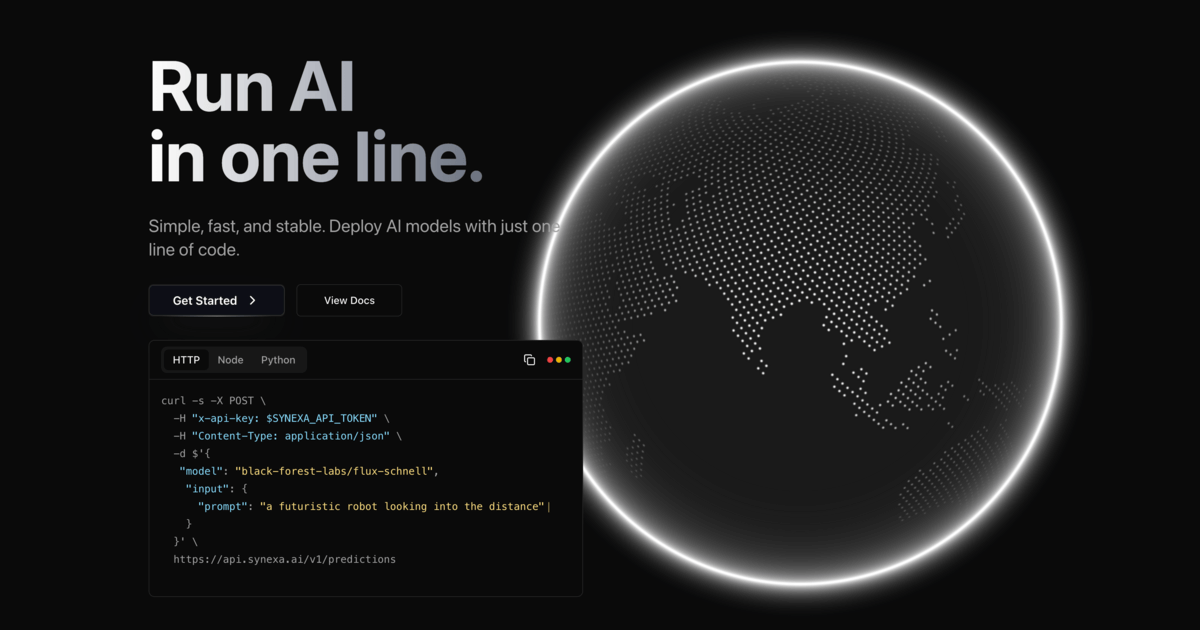

The platform offers model deployment through a single line of code, running on cost-effective, auto-scaling GPU infrastructure built for fast inference. It includes real-time monitoring and logging for operational visibility, supports all major ML frameworks, and provides the security and compliance features that production environments require. Deployment options also include private cloud and on-premises installations.

Target Audience

This tool is ideal for machine learning engineers, data scientists, and MLOps teams seeking to accelerate their model deployment lifecycle and reduce operational burden. Organizations developing AI-powered products, especially those dealing with generative AI, computer vision, or natural language processing, will find its scalable and cost-effective infrastructure highly beneficial for production workloads.

Value Proposition

Synexa AI combines single-line deployment with cost-optimized GPU infrastructure, reducing the time and complexity typically associated with MLOps. It addresses three common pain points: infrastructure management, dynamic scaling, and cost control. This lets teams iterate faster and bring AI features to market sooner without sacrificing performance or reliability.

Use Cases

Synexa AI excels in scenarios requiring rapid and scalable deployment of machine learning models. This includes hosting large language models and diffusion models for generative AI applications, serving real-time computer vision models for immediate processing, and deploying scalable natural language processing microservices. It is also highly effective for dynamic recommendation engines, financial modeling for fraud detection, and creating robust, ML-powered APIs for seamless integration into other software systems.

Frequently Asked Questions

Synexa AI is best suited for machine learning engineers, data scientists, and MLOps teams seeking to accelerate their model deployment lifecycle and reduce operational burden. Organizations developing AI-powered products, especially those dealing with generative AI, computer vision, or natural language processing, will find its scalable and cost-effective infrastructure highly beneficial for production workloads.