Lastmile AI


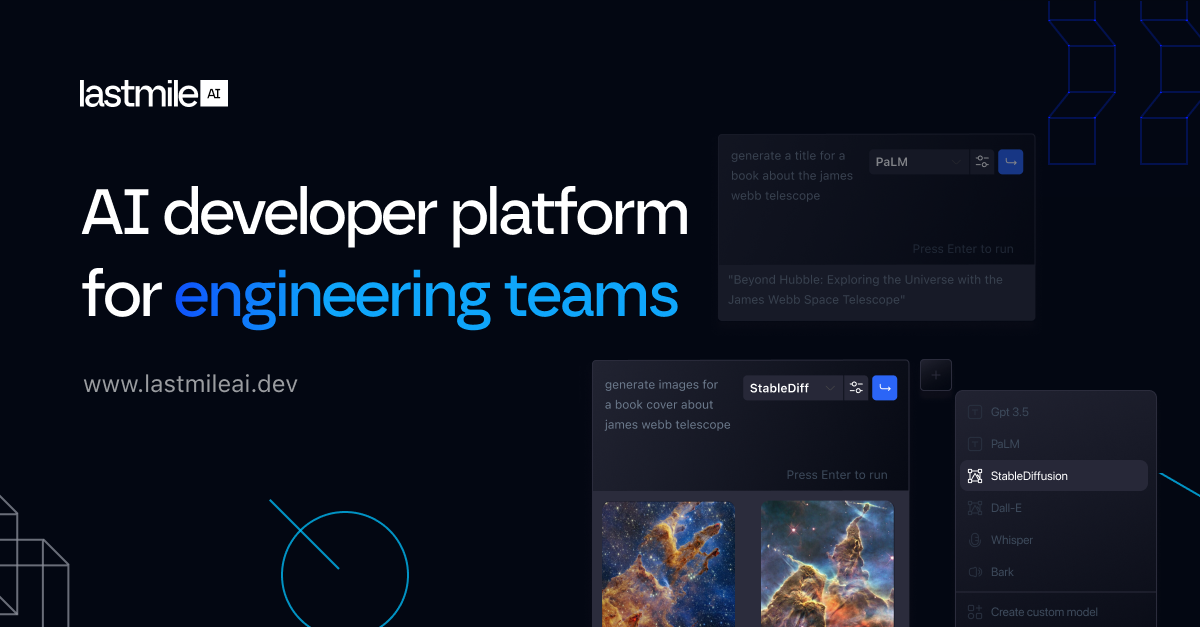

Lastmile AI is a full-stack platform for improving the reliability and performance of AI applications, particularly those powered by Large Language Models (LLMs). It provides end-to-end tools for debugging, evaluating, and continuously improving AI systems throughout the development lifecycle. By combining observability, testing, and production monitoring, it helps developers and ML teams build, deploy, and maintain production-ready AI applications. It is aimed at teams moving AI prototypes into stable production environments.

What It Does

Lastmile AI provides a unified platform to manage the lifecycle of LLM-powered applications, from development to production. It captures every interaction, allowing for detailed tracing and debugging of AI system behavior. The platform enables rigorous evaluation through custom metrics and automated testing, and continuously monitors production performance to detect and alert on regressions or cost inefficiencies.

Pricing

Custom Enterprise

Tailored solutions for enterprises with specific needs for scaling and securing their LLM applications in production.

- Full-stack LLM Observability

- Automated AI Evaluation

- Prompt and Model Debugging

- Continuous Production Monitoring

- Golden Dataset Management


Core Value Propositions

Accelerated AI Deployment

Streamlines the path from prototype to production by providing tools to debug, evaluate, and monitor AI applications efficiently, reducing time-to-market.

Enhanced LLM Reliability

Ensures AI applications consistently perform as expected through continuous monitoring, proactive issue detection, and rigorous evaluation processes.

Proactive Issue Detection

Monitors production performance and alerts on regressions, enabling teams to address problems before they impact users or incur significant costs.

Data-Driven AI Improvement

Provides comprehensive data and insights from every LLM interaction, empowering teams to make informed decisions for continuous model and prompt optimization.

Reduced Operational Costs

Helps optimize LLM usage and identify inefficiencies, leading to better resource allocation and lower operational expenses for AI applications.

Use Cases

Debugging LLM Chatbot Failures

Trace specific user interactions to pinpoint why a chatbot generated an irrelevant or incorrect response, identifying issues in prompt, context, or tool usage.

Evaluating New AI Models/Prompts

Rigorously test and compare new LLMs or prompt engineering strategies against golden datasets before deploying to production.

Monitoring Production AI Agents

Continuously track key performance indicators like latency, token usage, and error rates for AI agents in production, with alerts for anomalies.

A/B Testing LLM Configurations

Run controlled experiments to compare the effectiveness and cost-efficiency of different LLM providers, model versions, or prompt variations.

Ensuring RAG System Reliability

Gain full visibility into the retrieval and generation steps of RAG applications, helping verify that answers are grounded in the retrieved context and reducing hallucinations.

Optimizing AI Application Costs

Monitor token usage and API calls across different LLM applications to identify cost-saving opportunities and optimize resource allocation.

Technical Features & Integration

End-to-End LLM Observability

Captures and visualizes every prompt, response, and internal step, providing deep insights into LLM application behavior and performance.
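To make the idea of capturing every step concrete, here is a minimal, generic sketch of request tracing in plain Python. It is not Lastmile AI's SDK: the `Trace` and `Span` classes and the `retrieve` and `generate` functions are hypothetical names invented for this illustration.

```python
import time
from dataclasses import dataclass, field


@dataclass
class Span:
    """One recorded step (e.g. retrieval or an LLM call) within a trace."""
    name: str
    inputs: dict
    output: object = None
    duration_s: float = 0.0


@dataclass
class Trace:
    """All steps recorded for a single user request."""
    request_id: str
    spans: list = field(default_factory=list)

    def record(self, name, fn, **inputs):
        """Run fn(**inputs), store inputs, output, and duration, then return the output."""
        start = time.perf_counter()
        output = fn(**inputs)
        self.spans.append(Span(name, inputs, output, time.perf_counter() - start))
        return output


# Hypothetical stand-ins for a retriever and an LLM call.
def retrieve(query):
    return ["Paris is the capital of France."]


def generate(prompt):
    return "Paris"


trace = Trace(request_id="req-1")
docs = trace.record("retrieve", retrieve, query="capital of France?")
answer = trace.record("generate", generate, prompt=f"Context: {docs[0]}\nQ: capital of France?")

# Inspect each recorded step to see what went in and what came out.
for span in trace.spans:
    print(span.name, span.inputs, "->", span.output)
```

Because every step stores its own inputs and output, a bad final answer can be traced back to the step that introduced the problem, such as an irrelevant retrieved document.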

Automated AI Evaluation

Enables defining custom metrics and running automated evaluations against golden datasets to rigorously test and compare new models or prompt versions.
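As a rough illustration of evaluating against a golden dataset, the sketch below scores two candidate models with a custom metric and compares the results. Everything here is an assumption made for the example (the `exact_match` metric, the `evaluate` helper, and the toy models); it does not reflect Lastmile AI's actual API.

```python
def exact_match(expected: str, actual: str) -> float:
    """Custom metric: 1.0 if the answers match after normalization, else 0.0."""
    return float(expected.strip().lower() == actual.strip().lower())


def evaluate(model, golden_dataset, metric) -> float:
    """Return the mean metric score of `model` over the golden dataset."""
    scores = [metric(case["expected"], model(case["input"])) for case in golden_dataset]
    return sum(scores) / len(scores)


# A tiny golden dataset: curated inputs with known-good answers.
golden_dataset = [
    {"input": "2 + 2", "expected": "4"},
    {"input": "capital of France", "expected": "Paris"},
]


# Hypothetical models, stubbed as lookup tables for the example.
def baseline_model(prompt: str) -> str:
    return {"2 + 2": "4"}.get(prompt, "unknown")


def candidate_model(prompt: str) -> str:
    return {"2 + 2": "4", "capital of France": "paris"}.get(prompt, "unknown")


baseline_score = evaluate(baseline_model, golden_dataset, exact_match)
candidate_score = evaluate(candidate_model, golden_dataset, exact_match)
print(f"baseline={baseline_score:.2f} candidate={candidate_score:.2f}")
```

Running the same dataset and metric against every new model or prompt version keeps comparisons consistent from one release to the next.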

Prompt and Model Debugging

Facilitates identifying the root causes of AI application failures by allowing developers to trace issues, compare different runs, and manage prompt versions.

Continuous Production Monitoring

Tracks critical production metrics like latency, error rates, and costs, offering real-time insights and proactive alerting for performance regressions.
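One simple way to think about regression alerting is to compare a current window of requests against a baseline window. The sketch below is a generic illustration under assumed names and thresholds (`summarize`, `find_regressions`, and a 20% tolerance); it is not how Lastmile AI necessarily implements monitoring.

```python
from statistics import mean


def summarize(requests):
    """Aggregate per-request records into window-level metrics."""
    return {
        "avg_latency_ms": mean(r["latency_ms"] for r in requests),
        "error_rate": sum(r["error"] for r in requests) / len(requests),
        "total_cost_usd": sum(r["cost_usd"] for r in requests),
    }


def find_regressions(baseline, current, tolerance=0.2):
    """Return metrics whose current value exceeds the baseline by more than `tolerance` (relative)."""
    return [
        name for name, base in baseline.items()
        if current[name] > base * (1 + tolerance)
    ]


# Illustrative request logs; the values are made up for the example.
baseline_window = [
    {"latency_ms": 100, "error": False, "cost_usd": 0.01},
    {"latency_ms": 120, "error": False, "cost_usd": 0.01},
]
current_window = [
    {"latency_ms": 200, "error": True, "cost_usd": 0.01},
    {"latency_ms": 180, "error": False, "cost_usd": 0.01},
]

regressions = find_regressions(summarize(baseline_window), summarize(current_window))
print("Regressed metrics:", regressions)
```

In this toy data, latency and error rate rise beyond the tolerance while cost stays flat, so only the first two would trigger an alert.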

Golden Dataset Management

Helps create, manage, and utilize high-quality test cases to establish benchmarks and ensure consistent evaluation across development cycles.

Version Control for Prompts

Allows teams to track changes to prompts and models, enabling systematic experimentation and rollback of AI configurations.
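The idea of prompt versioning with rollback can be sketched as an append-only history. This is a hypothetical, minimal illustration (the `PromptHistory` class is invented here) rather than a description of Lastmile AI's implementation.

```python
class PromptHistory:
    """Append-only history of prompt versions with rollback."""

    def __init__(self, initial: str):
        self._versions = [initial]

    @property
    def current(self) -> str:
        """The most recently published prompt text."""
        return self._versions[-1]

    @property
    def version(self) -> int:
        """1-based number of the current version."""
        return len(self._versions)

    def update(self, text: str) -> int:
        """Publish a new prompt version and return its number."""
        self._versions.append(text)
        return self.version

    def rollback(self, version: int) -> str:
        """Re-publish an earlier version as the newest one, keeping the full history."""
        if not 1 <= version <= len(self._versions):
            raise ValueError(f"unknown version: {version}")
        self._versions.append(self._versions[version - 1])
        return self.current


history = PromptHistory("Answer briefly.")
history.update("Answer briefly and cite sources.")
history.rollback(1)  # the new prompt underperformed, so restore version 1
print(history.version, history.current)
```

Rolling back by appending, rather than deleting, preserves a record of every configuration that was ever live, which keeps later comparisons between runs reproducible.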

Major LLM Integrations

Seamlessly integrates with popular LLM providers (e.g., OpenAI, Anthropic) and frameworks (e.g., LangChain, LlamaIndex), simplifying setup and adoption.

Custom Metric Definition

Empowers users to define and track custom evaluation metrics relevant to their specific application's success criteria and business goals.

Target Audience

This tool is primarily for ML engineers, AI developers, and data scientists responsible for building, deploying, and maintaining LLM-powered applications. It also benefits engineering leaders and product managers who need to ensure the reliability, performance, and quality of AI products in production environments. Teams looking to move AI prototypes confidently into production are the ideal users.

Frequently Asked Questions

Lastmile AI is a paid tool. Available plans include: Custom Enterprise.


