Lakera Guard

Last updated:

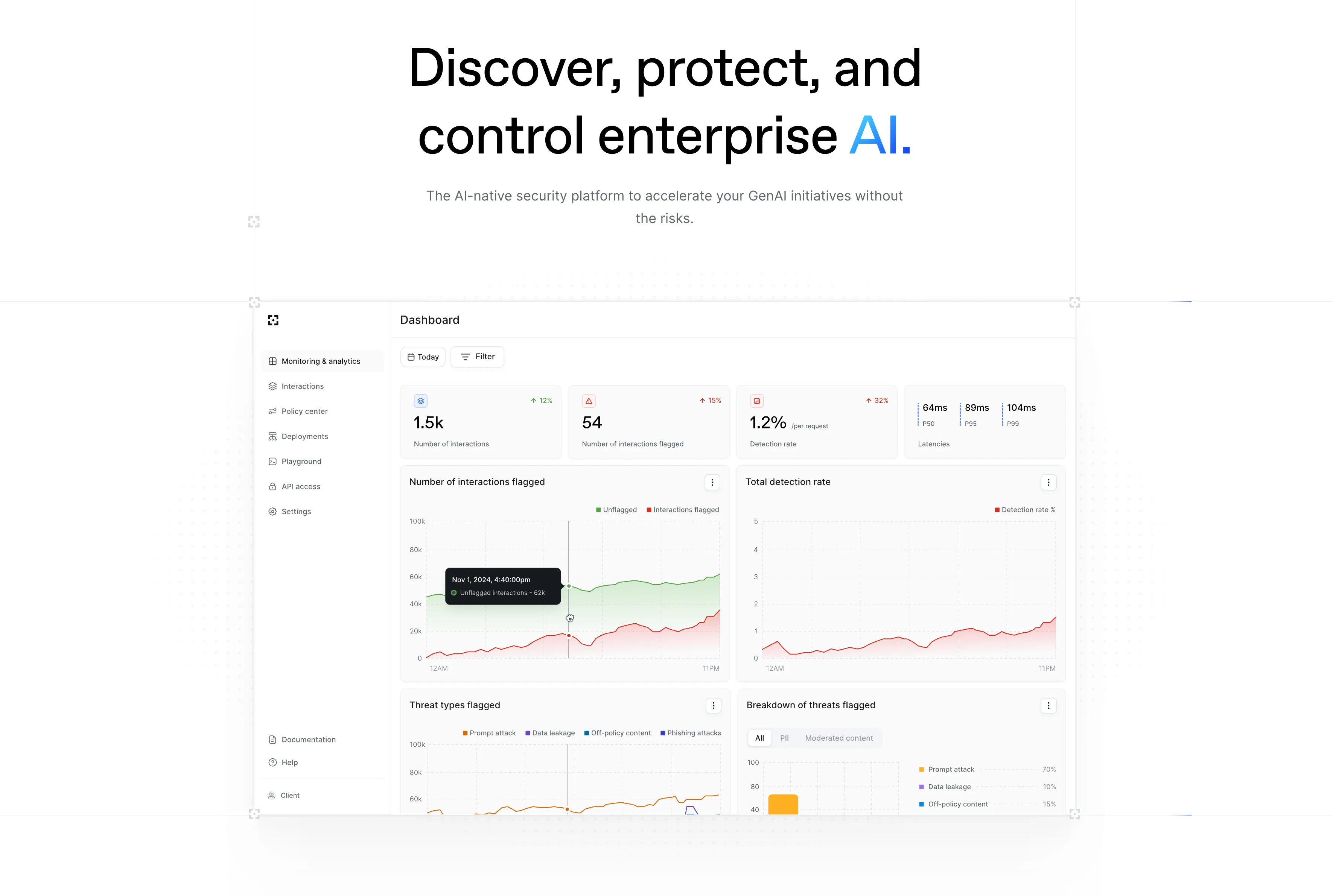

Lakera Guard is an advanced AI security platform designed to safeguard Large Language Model (LLM) applications against a spectrum of emerging threats. It provides real-time detection and mitigation capabilities for risks such as prompt injections, data exfiltration, jailbreaks, and the generation of unsafe or PII-laden content. By offering a robust API and a comprehensive analytics dashboard, Lakera Guard empowers businesses to deploy secure, compliant, and trustworthy generative AI solutions, protecting both their data and reputation.

What It Does

Lakera Guard functions as a real-time security layer for LLM applications, intercepting both user inputs and LLM outputs via an API. It analyzes these interactions using sophisticated models to detect a wide range of threats, including prompt injections, data exfiltration attempts, and unsafe content. Upon detection, it provides a confidence score, enabling developers to block malicious requests or flag risky responses, thereby protecting the underlying LLM and sensitive data.

Pricing

Pricing Plans

Free for developers to get started with basic LLM security features.

- Up to 100k requests/month

- 1 user

- Community support

- Basic analytics

For small teams and growing applications requiring more robust security features.

- Increased request limits

- 1-5 users

- Email support

- Advanced analytics

- API access

Comprehensive security solution for large organizations with advanced needs.

- Unlimited requests

- Unlimited users

- Dedicated support

- Custom integrations

- SLA

- +1 more

Key Features

Lakera Guard offers an API-first design for seamless integration into existing LLM workflows, providing real-time threat detection against prompt injections, jailbreaks, and data exfiltration. It includes robust PII and sensitive content detection to ensure data privacy and compliance. The platform also features an analytics dashboard for monitoring security posture and customizable rules to tailor protection policies, alongside managed threat intelligence.

Target Audience

This tool is ideal for enterprises, developers, and security teams building and deploying generative AI applications, particularly those utilizing large language models. It caters to organizations that prioritize AI safety, data privacy, regulatory compliance (e.g., GDPR, HIPAA), and brand reputation when integrating AI into their products or operations.

Value Proposition

Lakera Guard offers unparalleled security for LLM applications by providing a dedicated, real-time defense against complex AI-specific threats that traditional security measures miss. It solves the critical problem of safely deploying generative AI at scale, enabling businesses to innovate with confidence while ensuring compliance and protecting sensitive data. This proactive approach minimizes reputational damage and financial risks associated with AI vulnerabilities.

Use Cases

Securing AI chatbots, protecting customer-facing LLM applications, ensuring data privacy in GenAI interactions, and preventing misuse of AI models in enterprise environments.

Frequently Asked Questions

Lakera Guard offers a free plan with limited features. Paid plans are available for additional features and capabilities. Available plans include: Free Tier, Developer, Enterprise.

Lakera Guard functions as a real-time security layer for LLM applications, intercepting both user inputs and LLM outputs via an API. It analyzes these interactions using sophisticated models to detect a wide range of threats, including prompt injections, data exfiltration attempts, and unsafe content. Upon detection, it provides a confidence score, enabling developers to block malicious requests or flag risky responses, thereby protecting the underlying LLM and sensitive data.

Lakera Guard is best suited for This tool is ideal for enterprises, developers, and security teams building and deploying generative AI applications, particularly those utilizing large language models. It caters to organizations that prioritize AI safety, data privacy, regulatory compliance (e.g., GDPR, HIPAA), and brand reputation when integrating AI into their products or operations..

Get new AI tools weekly

Join readers discovering the best AI tools every week.