Evalsone


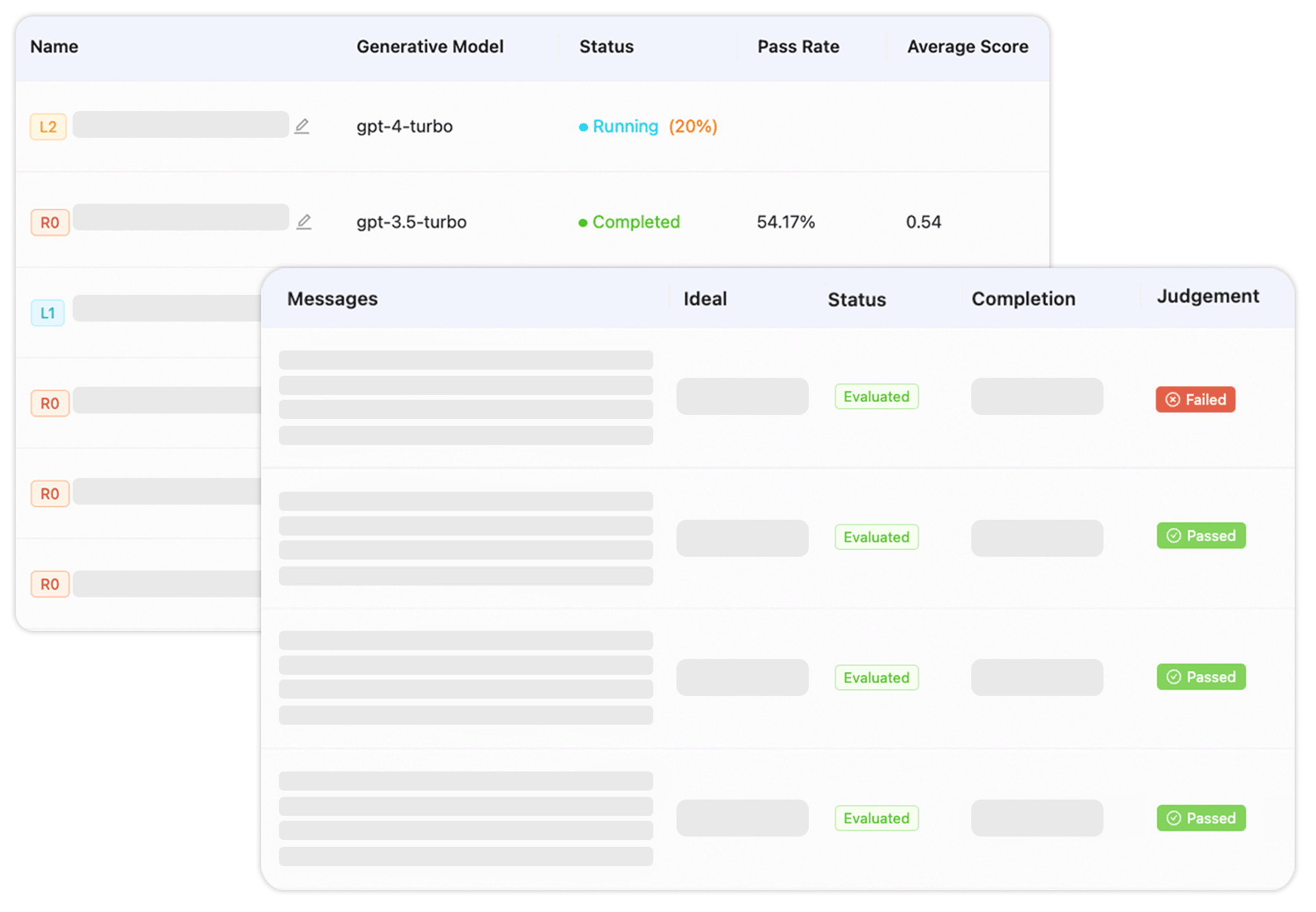

Evalsone is a platform for evaluating, optimizing, and monitoring generative AI applications, including those built on Large Language Models (LLMs). It gives AI developers, ML engineers, and product managers tools for systematic testing, bias detection, and performance benchmarking, with the aim of improving the quality, reliability, and ethical deployment of AI systems. The platform surfaces actionable insights intended to shorten development cycles, reduce the risks that come with generative AI, and keep model performance stable in production. Within an MLOps stack, it serves as an evaluation layer focused on the challenges specific to generative AI.

What It Does

Evalsone lets users define custom evaluation criteria and build test cases for their generative AI models. It runs these tests automatically, integrates with existing CI/CD pipelines, and provides analysis tools to detect biases, track performance, and identify areas for optimization. Because evaluation covers models both before and after deployment, teams can check that their AI applications meet quality, safety, and performance standards and receive continuous feedback for improving the model.

Pricing

Evalsone is a paid tool.

Key Features

Evalsone offers a test suite with both pre-built and customizable tests, along with bias and fairness detection to identify and mitigate harmful outputs. It provides performance benchmarking for comparing model iterations and presents its findings through dashboards and reports. The platform also supports human-in-the-loop feedback, integrates with popular ML frameworks and APIs, and offers observability for deployed models.

Target Audience

Evalsone is primarily designed for AI development teams, including ML engineers, data scientists, and product managers responsible for building, deploying, and maintaining generative AI applications. It caters to organizations that prioritize the quality, safety, ethical compliance, and long-term reliability of their AI solutions, particularly those working with LLMs and other generative models.

Value Proposition

Evalsone's main value is that it brings end-to-end generative AI evaluation into a single platform, going beyond ad hoc testing to check production readiness and sustained performance. It addresses core problems such as verifying model reliability, detecting subtle biases, and maintaining performance in production, which helps reduce development risk and supports the safe, ethical deployment of AI applications.

Use Cases

Validating new AI models, monitoring deployed models, comparing different models, improving prompt engineering, and ensuring responsible AI.

Frequently Asked Questions

Evalsone is a paid tool.
