Contentmod

Contentmod is an AI-powered API for text and image moderation that lets businesses and platforms automatically detect and filter harmful content. It identifies profanity, hate speech, sexually explicit material, violence, and Personally Identifiable Information (PII) across multiple languages, helping keep online environments safe and compliant. It is aimed at developers and companies that need to build content safety features directly into their applications and services. Its main differentiators are real-time analysis, extensive customization options, and an API-first design for integration.

What It Does

Contentmod provides a REST API to which platforms submit user-generated text and images for automated analysis. AI models scan the content against predefined categories of harm, such as hate speech, profanity, nudity, and violence, and the API returns moderation scores and labels. Platforms can use these results to filter or flag inappropriate content in real time, before it reaches other users. This automates a critical, labor-intensive process and helps keep online environments safe and compliant.
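The filter-or-flag step described above usually comes down to comparing per-category scores against thresholds. The sketch below assumes a hypothetical response shape (a JSON object whose `scores` field maps category names to values between 0 and 1); the field names, category names, and threshold values are illustrative assumptions, not Contentmod's documented schema.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    FLAG = "flag"    # queue for human review
    BLOCK = "block"  # reject before publishing


# Hypothetical thresholds; a real deployment would tune these per category.
FLAG_AT = 0.5
BLOCK_AT = 0.9


def decide(scores: dict[str, float]) -> Action:
    """Map hypothetical per-category scores (0.0 to 1.0) to an action.

    The highest-scoring category drives the decision.
    """
    worst = max(scores.values(), default=0.0)
    if worst >= BLOCK_AT:
        return Action.BLOCK
    if worst >= FLAG_AT:
        return Action.FLAG
    return Action.ALLOW


# Assumed response body, for illustration only.
response = {"scores": {"hate_speech": 0.12, "profanity": 0.67, "violence": 0.03}}
print(decide(response["scores"]))  # Action.FLAG
```

Using the single worst category keeps the rule easy to audit; platforms that need different tolerances per category would keep a threshold table keyed by category name instead.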

Pricing

Free Trial

Explore Contentmod's capabilities with a limited number of free API calls.

- 5,000 API calls

- Basic moderation

Starter

Ideal for small to medium-sized platforms that need robust content moderation.

- 100,000 API calls/month

- Real-time Text & Image Moderation

- Custom Policies

- Analytics Dashboard

- Multi-language Support

Growth

Designed for growing platforms with higher content volume and advanced support needs.

- 500,000 API calls/month

- All Starter features

- Priority Support

Enterprise

Tailored solutions for large organizations with specific requirements and high-volume usage.

- Custom API call volume

- Dedicated Support

- Custom Models

- Advanced Integrations
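To see which tier a platform falls into, estimate its monthly API call volume from content volume. The sketch below uses only the monthly quotas listed above and assumes one API call per text item and one per image; how Contentmod actually meters requests is an assumption to check against its documentation. The Free Trial's 5,000 calls are not stated as a monthly quota, so that plan is left out.

```python
# Monthly quotas from the plan list above; Enterprise has a custom quota.
PLAN_QUOTAS = [
    ("Starter", 100_000),
    ("Growth", 500_000),
]


def monthly_calls(texts_per_day: int, images_per_day: int, days: int = 30) -> int:
    """Estimate monthly calls, assuming one call per text item and one per image."""
    return (texts_per_day + images_per_day) * days


def pick_plan(calls_per_month: int) -> str:
    """Return the smallest listed plan whose monthly quota covers the volume."""
    for name, quota in PLAN_QUOTAS:
        if calls_per_month <= quota:
            return name
    return "Enterprise"


calls = monthly_calls(texts_per_day=3_000, images_per_day=500)
print(calls, pick_plan(calls))  # 105000 Growth
```

In this example, 3,500 items a day comes to 105,000 calls over 30 days, just over the Starter quota, so the Growth plan is the smallest listed tier that fits.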

Core Value Propositions

Automated Content Safety

Reduces manual moderation workload by automatically detecting and filtering harmful content in real time, saving time and resources.

Enhanced User Trust

Creates a safer online environment, fostering user engagement and loyalty by proactively removing inappropriate content.

Scalable & Flexible Moderation

Handles high volumes of user-generated content and adapts to specific platform needs through customizable policies.

Global Compliance & Reach

Supports multiple languages and customizable rules, helping platforms comply with regional regulations and cater to a global audience.

Use Cases

Moderating Social Media Feeds

Automatically filters harmful text and images from user posts, comments, and profiles on social networking sites.

Securing Gaming Chats

Detects and blocks profanity, hate speech, and harassment in real time within in-game chats and forums, improving the player experience.

Filtering E-commerce Reviews

Screens product reviews, questions, and images for inappropriate content, spam, or PII before they are published.

Enhancing Dating App Safety

Moderates user-generated content in profiles and messages to identify sexually explicit material, harassment, or abusive language.

Monitoring Online Learning Platforms

Helps maintain a safe learning environment and supports academic integrity by moderating discussions and submitted content for inappropriate material.

Technical Features & Integration

Real-time Text Moderation

Detects profanity, hate speech, harassment, PII, and more in text content as it is submitted, across multiple languages, so harmful messages can be stopped before they reach other users.
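Real-time moderation matters most in chat, where a message should not appear until it has been checked. A common client-side pattern, sketched below, gives the moderation call a fixed latency budget and holds the message if no answer arrives in time (fail closed). The `moderate` callable stands in for whatever function wraps the Contentmod request; it, the stubs, and the 300 ms budget are all assumptions for illustration.

```python
import concurrent.futures
import time
from typing import Callable

# Hypothetical: any function that checks text via the moderation API and
# returns True if the text is acceptable.
ModerationCall = Callable[[str], bool]

_pool = concurrent.futures.ThreadPoolExecutor(max_workers=8)


def gate_message(text: str, moderate: ModerationCall, budget_s: float = 0.3) -> str:
    """Return "publish", "reject", or "hold" for a chat message.

    If moderation does not answer within the latency budget, or fails, the
    message is held for later review instead of being published.
    """
    future = _pool.submit(moderate, text)
    try:
        ok = future.result(timeout=budget_s)
    except concurrent.futures.TimeoutError:
        return "hold"  # too slow: keep the message out of the chat for now
    except Exception:
        return "hold"  # API or network error: same fail-closed behaviour
    return "publish" if ok else "reject"


# Stand-in moderation functions for demonstration (hypothetical).
def fast_stub(text: str) -> bool:
    return "badword" not in text


def slow_stub(text: str) -> bool:
    time.sleep(1.0)  # simulates a response that misses the budget
    return True


print(gate_message("hello", fast_stub))         # publish
print(gate_message("badword here", fast_stub))  # reject
print(gate_message("hello", slow_stub))         # hold
```

Failing closed suits high-risk contexts such as chats involving minors; a platform that values latency over strictness could return "publish" on timeout and moderate the message after the fact.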

AI Image Moderation

Automatically identifies nudity, sexually explicit material, violence, gore, and hate symbols in images, safeguarding visual content.
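Image moderation APIs typically accept either a link to a hosted image or the raw bytes sent inline. The sketch below builds a request body for either case; the field names (`image_url`, `image_base64`, `categories`) and category labels are hypothetical placeholders, not Contentmod's documented schema.

```python
import base64
import json
from typing import Optional

# Hypothetical category names mirroring the harms listed above.
DEFAULT_CATEGORIES = ("nudity", "sexual", "violence", "gore", "hate_symbols")


def image_payload(
    *,
    url: Optional[str] = None,
    data: Optional[bytes] = None,
    categories: tuple[str, ...] = DEFAULT_CATEGORIES,
) -> dict:
    """Build a JSON-serializable body for an image moderation request.

    Exactly one of `url` (image hosted elsewhere) or `data` (raw bytes,
    sent base64-encoded) must be given. Field names are placeholders.
    """
    if (url is None) == (data is None):
        raise ValueError("pass exactly one of url or data")
    body = {"categories": list(categories)}
    if url is not None:
        body["image_url"] = url
    else:
        body["image_base64"] = base64.b64encode(data).decode("ascii")
    return body


inline = image_payload(data=b"abc")
print(inline["image_base64"])  # YWJj
print(json.dumps(image_payload(url="https://example.com/upload.jpg")))
```

Sending a URL keeps request bodies small, while inline bytes avoid exposing an unmoderated upload at a public address before it has been checked.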

Multi-language Support

Performs content analysis effectively across numerous languages, making it suitable for global platforms and diverse user bases.

Custom Content Policies

Allows businesses to define and fine-tune moderation rules, including custom blacklists and whitelists, to match specific brand and community standards.
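Contentmod applies custom policies on its side; the sketch below is only a client-side analogue showing how blacklist and whitelist rules (here named `blocklist` and `allowlist`) can be layered on top of a model score. The `Policy` class, the `flagged_terms` input, and the 0.8 threshold are hypothetical.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Policy:
    """Hypothetical client-side policy layered on top of model output."""
    blocklist: set[str] = field(default_factory=set)  # always block these words
    allowlist: set[str] = field(default_factory=set)  # never penalize these words
    threshold: float = 0.8


def should_block(text: str, profanity_score: float,
                 flagged_terms: list[str], policy: Policy) -> bool:
    """Return True if the text should be blocked under the policy.

    1. Any blocklisted word blocks the text outright.
    2. If every term the model flagged is allowlisted, the text passes.
    3. Otherwise the model score is compared with the threshold.
    """
    words = set(re.findall(r"\w+", text.lower()))
    if words & policy.blocklist:
        return True
    remaining = [t for t in flagged_terms if t.lower() not in policy.allowlist]
    if not remaining:
        return False
    return profanity_score >= policy.threshold


policy = Policy(blocklist={"scamlink"}, allowlist={"scunthorpe"})
print(should_block("Visit Scunthorpe today", 0.85, ["Scunthorpe"], policy))  # False
print(should_block("buy now at scamlink", 0.1, [], policy))                 # True
```

The allowlist handles false positives such as place names that contain offensive substrings, while the blocklist covers terms a community bans regardless of how the model scores them.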

Developer-friendly REST API

Offers a straightforward and well-documented REST API for quick and easy integration into existing applications and workflows.
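A minimal sketch of calling a JSON REST moderation endpoint with Python's standard library follows. The URL, path, request fields (`text`, `language`), and bearer-token header are placeholders assumed for illustration; the real values come from Contentmod's API documentation.

```python
import json
import urllib.request

# Hypothetical values: substitute the real base URL, path, and auth scheme.
API_URL = "https://api.example.com/v1/moderate/text"
API_KEY = "YOUR_API_KEY"


def build_request(text: str, language: str = "auto") -> urllib.request.Request:
    """Build a POST request with a JSON body (field names are assumptions)."""
    body = json.dumps({"text": text, "language": language}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",
        },
        method="POST",
    )


def moderate_text(text: str, timeout: float = 5.0) -> dict:
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(build_request(text), timeout=timeout) as resp:
        return json.load(resp)


req = build_request("hello")
print(req.get_method(), req.full_url)  # POST https://api.example.com/v1/moderate/text
```

Keeping request construction separate from sending makes the payload easy to test without network access; `moderate_text` is only called once real credentials and the real endpoint are in place.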

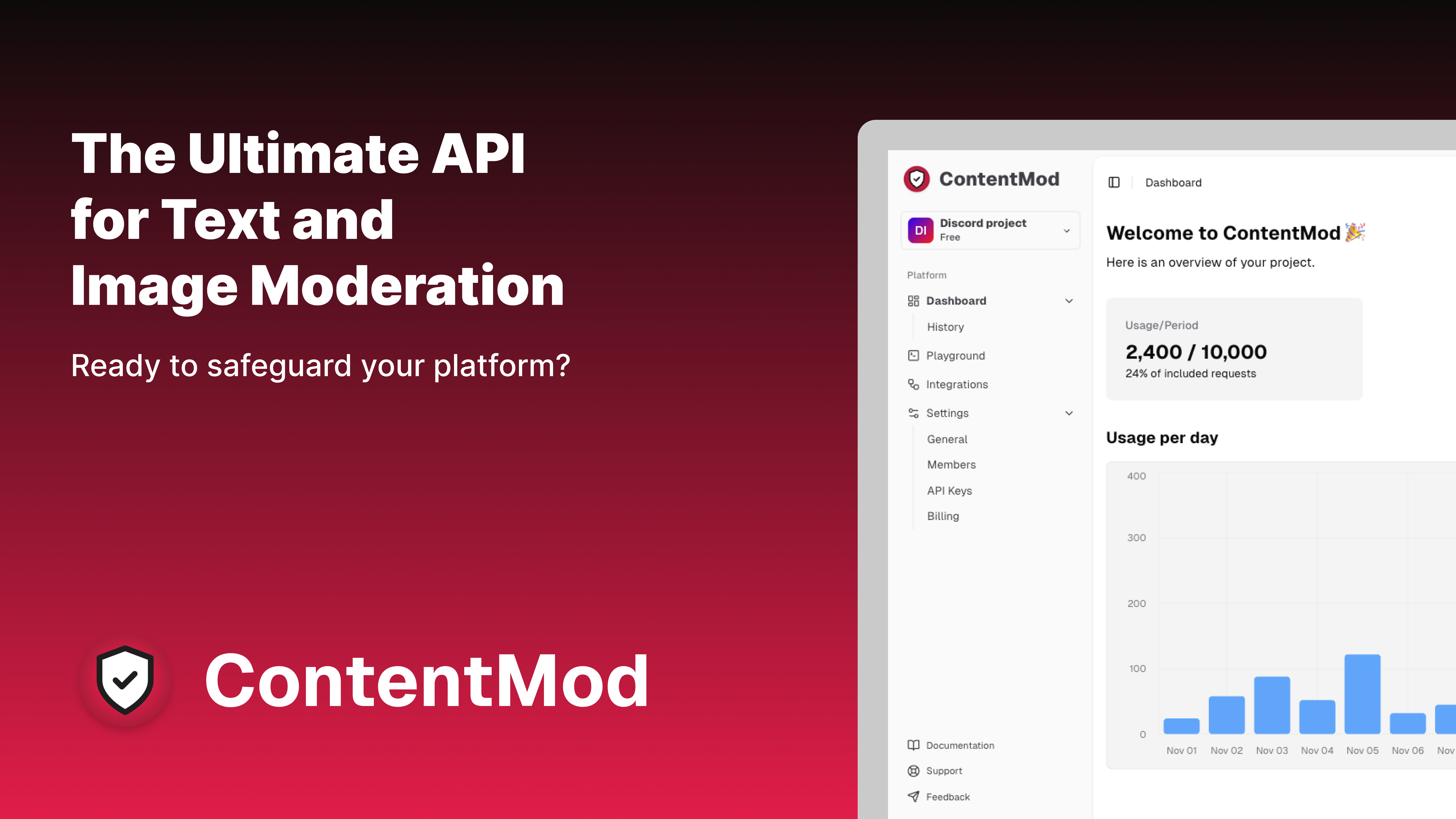

Analytics Dashboard

Provides a comprehensive dashboard to monitor moderation activity, view flagged content, and gain insights into content trends and policy effectiveness.

PII Detection

Automatically identifies and flags Personally Identifiable Information (PII) in both text and images, enhancing user privacy and compliance.
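If a PII finding comes back with character offsets, a platform can redact it instead of rejecting the whole message. The sketch below assumes a hypothetical finding shape (`start`, exclusive `end`, and `type`) and non-overlapping spans; Contentmod's actual response format may differ.

```python
from typing import TypedDict


class PiiSpan(TypedDict):
    """Hypothetical shape of one PII finding."""
    start: int  # inclusive character offset
    end: int    # exclusive character offset
    type: str   # e.g. "EMAIL", "PHONE"


def redact(text: str, spans: list[PiiSpan]) -> str:
    """Replace each (non-overlapping) span with a [TYPE] placeholder.

    Spans are applied right to left so earlier offsets stay valid.
    """
    out = text
    for span in sorted(spans, key=lambda s: s["start"], reverse=True):
        out = out[: span["start"]] + f"[{span['type']}]" + out[span["end"]:]
    return out


msg = "Mail me at jane@example.com or call 555-0100."
findings: list[PiiSpan] = [
    {"start": 11, "end": 27, "type": "EMAIL"},
    {"start": 36, "end": 44, "type": "PHONE"},
]
print(redact(msg, findings))  # Mail me at [EMAIL] or call [PHONE].
```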

Target Audience

Contentmod primarily targets businesses and platforms that handle large volumes of user-generated content, including social media networks, online communities, gaming platforms, and e-commerce marketplaces. Developers looking to integrate robust content moderation capabilities into their applications will find its API-first approach highly beneficial. It's also valuable for educational platforms and customer support systems needing to maintain a safe and respectful communication environment.

Frequently Asked Questions

Contentmod offers a free trial with limited features and API calls; paid plans add higher quotas and additional features. Available plans are Free Trial, Starter, Growth, and Enterprise.
