Beam AI

Last updated:

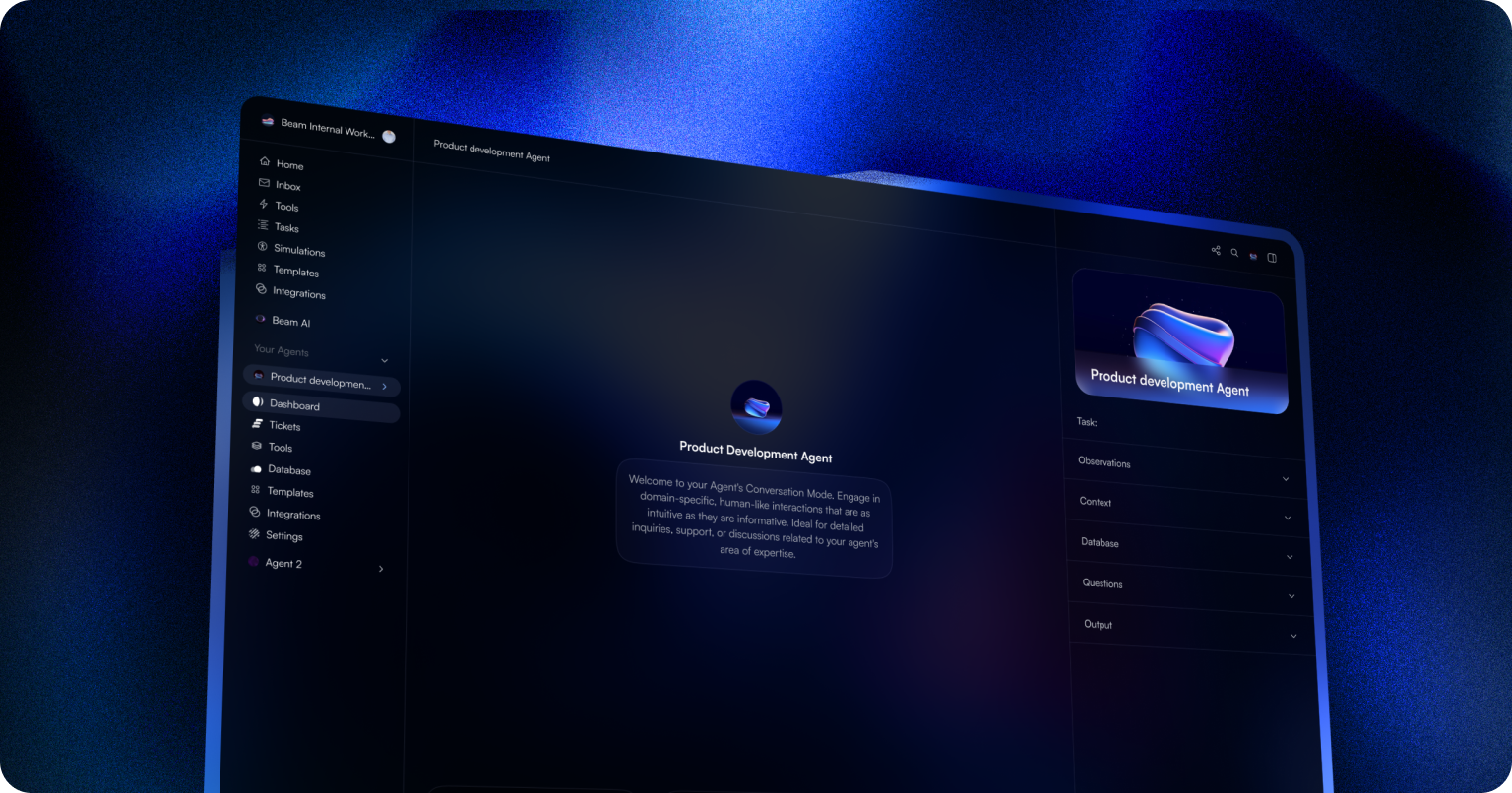

Beam AI is a serverless platform for deploying, scaling, and managing AI models and agentic workflows. It abstracts away GPU management and other MLOps infrastructure so that developers and data scientists can focus on building AI applications. The platform supports a range of AI frameworks and includes tools for orchestration, memory management, and observability.

What It Does

Beam AI provides a cloud-native environment where users can deploy any AI model, from large language models to custom fine-tuned models, and orchestrate multi-step AI agents with persistent memory and tool integration. It manages the underlying serverless GPU infrastructure, scaling resources automatically with demand, and offers a Python SDK and API for integrating with existing development workflows.

Pricing

Pricing Plans

Pay-as-you-go

Start on the free tier, then pay only for the compute (GPU hours), storage, and bandwidth you consume beyond its limits.

- Free tier for small projects

- Usage-based billing for GPU hours

- Usage-based billing for storage

- Usage-based billing for bandwidth
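To see how usage-based billing adds up, here is a minimal Python sketch of a monthly estimate: each resource is billed only for the amount that exceeds the free allowance. The free-tier limits and per-unit rates below are hypothetical placeholders, not Beam AI's published prices, and `estimate_monthly_bill` is an illustrative name.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    gpu_hours: float
    storage_gb_months: float
    egress_gb: float


# Hypothetical placeholder values: NOT Beam AI's actual rates or free-tier limits.
FREE_ALLOWANCE = Usage(gpu_hours=10.0, storage_gb_months=10.0, egress_gb=10.0)
RATES = {"gpu_hours": 1.10, "storage_gb_months": 0.10, "egress_gb": 0.05}  # USD per unit


def estimate_monthly_bill(usage: Usage, free: Usage = FREE_ALLOWANCE, rates: dict = RATES) -> float:
    """Bill only the usage that exceeds the free allowance, per resource."""
    total = 0.0
    for resource, unit_price in rates.items():
        used = getattr(usage, resource)
        included = getattr(free, resource)
        billable = max(0.0, used - included)  # usage inside the free tier costs nothing
        total += billable * unit_price
    return round(total, 2)


# 30 billable GPU hours, 40 billable GB-months, 10 billable GB of egress.
print(estimate_monthly_bill(Usage(gpu_hours=40, storage_gb_months=50, egress_gb=20)))  # prints 37.5
```

Clamping at zero per resource means unused free allowance on one resource does not offset charges on another, which is the usual reading of per-resource free limits.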

Enterprise

Tailored solutions for large organizations that need dedicated resources, custom support, and enterprise-grade features.

- Dedicated support

- Custom SLAs

- Advanced security features

- Volume discounts

Core Value Propositions

Accelerated AI Deployment

Quickly take AI models from development to production without worrying about infrastructure setup or scaling challenges.

Seamless AI Agent Management

Build, deploy, and monitor complex, multi-step AI agents with integrated tools for memory and orchestration, simplifying advanced automation.

Scalable, Cost-Effective Infrastructure

Use serverless GPU resources that scale automatically with demand, so you pay only for the compute you actually use.

Enhanced Developer Productivity

Focus on AI innovation with a developer-friendly platform, Python SDK, and API that streamline the entire MLOps workflow.

Use Cases

LLM Inference & APIs

Deploy large language models as scalable APIs for applications like chatbots, content generation, and semantic search.

Generative AI Deployment

Run and scale open generative models such as Stable Diffusion to create images, video, or text-based content.

Complex AI Agent Workflows

Build and manage autonomous AI agents that perform multi-step tasks, interact with external tools, and maintain conversational memory.

Custom Model Fine-tuning

Use GPU resources to efficiently fine-tune pre-trained models or train custom machine learning models on your own datasets.

Real-time AI Applications

Power low-latency AI applications like real-time fraud detection, personalized recommendations, or interactive voice assistants.

Automated Data Processing

Execute batch processing jobs with AI models for tasks such as data classification, anomaly detection, or large-scale content moderation.

Technical Features & Integration

Serverless GPU Infrastructure

Access high-performance GPUs on demand without managing servers, ensuring optimal resource allocation and cost efficiency for AI workloads.
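As an illustration of how on-demand GPU capacity can track load, here is a minimal sketch of queue-based autoscaling, a common policy for serverless workers. It is not Beam AI's actual scaling algorithm; `desired_replicas` and its parameters are hypothetical.

```python
import math


def desired_replicas(queue_depth: int, tasks_per_replica: int,
                     min_replicas: int = 0, max_replicas: int = 10) -> int:
    """Replica count a simple queue-based autoscaler would target.

    min_replicas=0 models scale-to-zero: no queued work, no running GPUs.
    """
    if tasks_per_replica <= 0:
        raise ValueError("tasks_per_replica must be positive")
    needed = math.ceil(queue_depth / tasks_per_replica)  # enough replicas to drain the queue
    return max(min_replicas, min(max_replicas, needed))  # clamp to the configured range


for depth in (0, 25, 500):
    print(depth, desired_replicas(depth, tasks_per_replica=10))
```

With these settings an idle queue runs zero replicas, 25 queued tasks run 3, and a burst of 500 is capped at 10; the cap is what bounds cost during spikes.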

AI Agent Orchestration

Build and manage complex, multi-step AI agents with integrated memory, tools, and long-running processes, enabling sophisticated automation.
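The orchestration pattern described here (a multi-step agent that calls tools and keeps a memory of each step) can be sketched in plain Python. This is a generic illustration, not Beam AI's SDK: `run_agent`, the scripted `plan` that stands in for an LLM's decisions, and the toy tools are all hypothetical.

```python
from __future__ import annotations

from typing import Callable

Tool = Callable[[str], str]


def run_agent(task: str, plan: list[tuple[str, str]], tools: dict[str, Tool],
              memory: list[dict]) -> str:
    """Run a multi-step plan, calling one tool per step and logging it to memory.

    `plan` stands in for the decisions an LLM would make; "{prev}" in an
    argument is replaced by the previous step's output (the task for step 1).
    """
    result = task
    for tool_name, arg_template in plan:
        if tool_name not in tools:
            raise KeyError(f"unknown tool: {tool_name}")
        tool_input = arg_template.replace("{prev}", result)
        result = tools[tool_name](tool_input)
        memory.append({"tool": tool_name, "input": tool_input, "output": result})
    return result


tools = {"upper": str.upper, "reverse": lambda s: s[::-1]}
memory: list[dict] = []
print(run_agent("beam", [("upper", "{prev}"), ("reverse", "{prev}")], tools, memory))  # prints MAEB
print(len(memory))  # prints 2
```

Keeping the memory as an explicit list of step records makes it easy to persist between runs or to feed back into the next planning step.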

Comprehensive Observability

Monitor model performance, agent behavior, and resource utilization through integrated logs, metrics, and tracing.
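Integrated logs and metrics usually start with per-call instrumentation. The decorator below is a generic Python sketch of that idea using only the standard library (`logging`, `time`, `functools`); it is not Beam AI's observability API, and `traced` and `predict` are hypothetical names.

```python
import functools
import logging
import time

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("inference")


def traced(fn):
    """Log each call's latency (and any exception) and keep latencies for metrics."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return fn(*args, **kwargs)
        except Exception:
            logger.exception("%s failed", fn.__name__)  # logs the traceback
            raise
        finally:
            # Runs on success and failure alike, so every call is measured.
            elapsed_ms = (time.perf_counter() - start) * 1000
            wrapper.latencies_ms.append(elapsed_ms)
            logger.info("%s took %.2f ms", fn.__name__, elapsed_ms)

    wrapper.latencies_ms = []  # simple in-process metric store
    return wrapper


@traced
def predict(x: float) -> float:
    return 2 * x  # placeholder for a real model call


predict(3.0)
print(len(predict.latencies_ms))  # prints 1
```

A hosted platform would ship these measurements to a central metrics and tracing backend rather than a list in memory, but the wrap-time-log structure is the same.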

Flexible Model Deployment

Package models built with any framework (PyTorch, TensorFlow, JAX, Hugging Face, or custom code) as Docker containers and deploy them.

Python SDK & API

Integrate Beam AI into development workflows through a Python SDK and a REST API for programmatic control and automation.

Persistent Storage

Store and retrieve model weights, datasets, and agent memory with integrated persistent storage.
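A common way to keep agent memory across runs is to serialize it to a file on a persistent volume. Here is a minimal Python sketch, assuming a hypothetical mount point `./agent-memory`; `save_memory` and `load_memory` are illustrative names, not part of Beam AI's SDK.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical mount point for a persistent volume; substitute your own path.
STORE_DIR = Path("./agent-memory")


def save_memory(agent_id: str, memory: list, store_dir: Path = STORE_DIR) -> Path:
    """Write an agent's memory to JSON, replacing the old file in one step."""
    store_dir.mkdir(parents=True, exist_ok=True)
    path = store_dir / f"{agent_id}.json"
    tmp = path.with_name(path.name + ".tmp")
    tmp.write_text(json.dumps(memory))
    tmp.replace(path)  # rename over the old file; atomic on POSIX within one filesystem
    return path


def load_memory(agent_id: str, store_dir: Path = STORE_DIR) -> list:
    """Return the saved memory, or an empty list for a new agent."""
    path = store_dir / f"{agent_id}.json"
    if not path.exists():
        return []
    return json.loads(path.read_text())


# Usage against a throwaway directory standing in for the mounted volume.
with tempfile.TemporaryDirectory() as d:
    save_memory("agent-1", [{"role": "user", "content": "hi"}], Path(d))
    print(load_memory("agent-1", Path(d)))
```

Writing to a temporary file and renaming it means a crash mid-write leaves the previous memory file intact instead of a half-written one.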

Real-time & Batch Processing

Support both low-latency real-time inference for interactive applications and high-throughput batch processing for large datasets.
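High-throughput batch processing mostly comes down to grouping inputs so each model call handles many items, whereas real-time inference serves one request per call. A minimal, framework-agnostic Python sketch of the batch side follows; `run_batch_job` and `predict_batch` are hypothetical names, and the lambda stands in for a real batched model. Python 3.12 and later also ship `itertools.batched`, which yields tuples; the helper here is written out so it runs on 3.8+.

```python
from itertools import islice
from typing import Callable, Iterable, Iterator, List


def batched(items: Iterable, size: int) -> Iterator[list]:
    """Yield consecutive chunks of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    it = iter(items)
    while chunk := list(islice(it, size)):
        yield chunk


def run_batch_job(items: Iterable, predict_batch: Callable[[list], list],
                  batch_size: int = 32) -> List:
    """Run a model over a large dataset one batch at a time, preserving order."""
    results: List = []
    for chunk in batched(items, batch_size):
        outputs = predict_batch(chunk)  # one model call per batch amortizes overhead
        if len(outputs) != len(chunk):
            raise ValueError("model must return one output per input")
        results.extend(outputs)
    return results


print(run_batch_job(range(5), lambda xs: [x * x for x in xs], batch_size=2))  # prints [0, 1, 4, 9, 16]
```

Larger batches generally raise GPU utilization at the cost of per-item latency, which is why batch jobs and interactive endpoints are usually tuned differently.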

Target Audience

Beam AI is primarily aimed at AI/ML engineers, data scientists, and developers in startups and enterprises who need to deploy and scale AI models and agents efficiently. It's ideal for teams looking to accelerate their AI development cycle by offloading infrastructure management and focusing on core AI innovation.

Frequently Asked Questions

Beam AI offers a free tier for small projects. Beyond it, two paid plans are available: Pay-as-you-go (usage-based billing for GPU hours, storage, and bandwidth) and Enterprise.